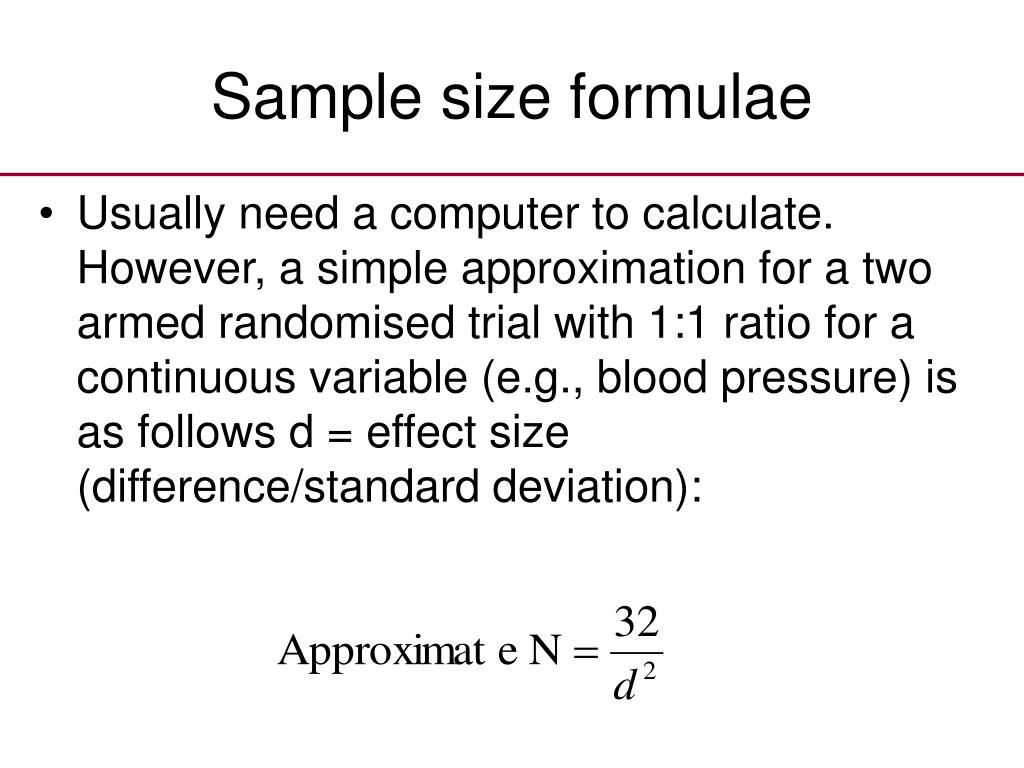

Use very large samples when comparing two treatments and you will find “true” differences so small as to be unimportant. Utilize a very small sample and you’ll have the opposite problem: you’ll fail to statistically prove even important differences. This month we are going to explore the concept of sample size and discuss ways to read between the lines when analyzing study results. Let’s start by referring back to last month’s coin analogy. If you flipped two coins, one of which had both sides heads and the other both sides tails, four times each, you’d obviously get four heads vs. four tails. But no statistical test would say there was a “significant difference,” because there is a reasonable chance that with two identical coins you would get just this outcome.
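The sample-size trade-off in the passage above can be made concrete with a small sketch. It uses a pooled two-proportion z-test, which is my choice for illustration and not something the quoted passage specifies. The response rates and group sizes are hypothetical, and the normal approximation is rough for the small trial.

```python
import math


def two_proportion_p_value(successes_a, successes_b, n_per_group):
    """Two-sided p-value of a pooled two-proportion z-test (normal approximation)."""
    p_a = successes_a / n_per_group
    p_b = successes_b / n_per_group
    pooled = (successes_a + successes_b) / (2 * n_per_group)
    std_err = math.sqrt(pooled * (1 - pooled) * 2 / n_per_group)
    z = (p_a - p_b) / std_err
    # For a standard normal Z, P(|Z| > |z|) equals erfc(|z| / sqrt(2)).
    return math.erfc(abs(z) / math.sqrt(2))


# Hypothetical numbers: a tiny 51% vs 50% gap in a huge trial,
# and a large 80% vs 50% gap in a trial with 10 subjects per group.
p_large_trial = two_proportion_p_value(51_000, 50_000, 100_000)
p_small_trial = two_proportion_p_value(8, 5, 10)

print(f"51% vs 50%, n = 100000 per group: p = {p_large_trial:.2g}")
print(f"80% vs 50%, n = 10 per group:     p = {p_small_trial:.2g}")
```

With these made-up numbers, the one-point difference in the huge trial comes out far below the usual 0.05 cut-off, while the thirty-point difference in the tiny trial does not reach it.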

The importance of sample size is well known in medical research. A comment from Chris Draheim in the ResearchGate thread "What is the minimum sample size to run Pearson's R?" also highlights the instability of small samples: "I wouldn’t trust any correlation without at least 50 or 60 observations, with 80-100 being around where I feel comfortable. From my experience with pilot data and analyzing subsets of datasets or presenting data on an ongoing study, correlations with 20-40 subjects can be markedly different than when you have 80-100, I’ve even seen correlations between two tasks going from -.70 to +.40 when the observations were [...] It’s also important to identify outliers, even with larger sample sizes an outlier or two can have a large effect on the magnitude of the correlation, since this is least squares after all."

In other words, we should try to obtain a larger sample whenever possible. Here, I would also like to reference the paper "At what sample size do correlations stabilize?", whose results indicate that in typical scenarios the sample size should approach 250 for stable estimates. In this case that would be a trivial solution, as it means I would have to poll the entire population (the student cohort is <250).

A smaller sample with high homogeneity will display a greater correlation coefficient than a large sample with low homogeneity (high heterogeneity). So if we choose to focus on a population that is homogeneous, we might not need a large sample size to reflect the correlation. Of course, if we want to be conservative, we can adjust the threshold at which we consider a correlation strong, while also considering the confidence interval of the correlation coefficient. There are online calculators, and also a package in R, that can compute this confidence interval. The calculation takes into account the observed sample correlation coefficient, the sample size and the confidence level (typically 0.95).
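For readers who want to see how such a confidence interval depends on sample size, here is a minimal sketch. It assumes the calculators use the Fisher z-transformation, which is the usual approach but is not confirmed by the sources above. The function name and the example correlation of 0.40 are my own illustration, not values from the survey.

```python
import math
from statistics import NormalDist


def pearson_confidence_interval(r, n, confidence=0.95):
    """Approximate CI for a Pearson correlation via the Fisher z-transformation."""
    if n <= 3:
        raise ValueError("need more than 3 observations")
    if not -1.0 < r < 1.0:
        raise ValueError("r must lie strictly between -1 and 1")
    z = math.atanh(r)                # Fisher transform of the sample correlation
    std_err = 1.0 / math.sqrt(n - 3)  # approximate standard error on the z scale
    z_crit = NormalDist().inv_cdf(1 - (1 - confidence) / 2)
    # Build the interval on the z scale, then transform back to the r scale.
    return math.tanh(z - z_crit * std_err), math.tanh(z + z_crit * std_err)


# Hypothetical observed correlation of 0.40 at two sample sizes.
for n in (50, 250):
    low, high = pearson_confidence_interval(0.40, n)
    print(f"n = {n}: 95% CI = ({low:.2f}, {high:.2f})")
```

The same observed correlation gives a much wider interval at 50 observations than at 250, which is one way to see why small-sample correlations are unstable.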
I then performed bootstrapping and selected random samples of 50 respondents ten times from the total pool of survey respondents. Even though the sample size is now smaller, strong correlations are observed for bootstrapped sample 6 (school v math, school v humanities, math v science) and sample 10 (school v math). So had we polled only the respondents in bootstrapped sample 6 (to represent the whole population), we would have concluded that there is a strong correlation between those variables. This shows that a large sample size doesn't make us more likely to observe stronger correlations; in this case, the larger sample actually weakens the correlation.

This goes to show that what matters more is understanding the homogeneity of the population and how we perform the sampling (i.e. whether the sample is randomly selected and representative of the stratification of the population).
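The resampling step can be sketched roughly as follows. This is not the code used for the survey: the data are synthetic, the pool size of 240 and the weak built-in relationship are assumptions, and I assume sampling with replacement (swap `rng.choices` for `rng.sample` to draw without replacement).

```python
import math
import random


def pearson_r(xs, ys):
    """Pearson correlation coefficient of two equal-length sequences."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    return cov / math.sqrt(var_x * var_y)


def resampled_correlations(pairs, sample_size=50, n_samples=10, seed=0):
    """Draw n_samples random samples (with replacement) and correlate each one."""
    rng = random.Random(seed)
    results = []
    for _ in range(n_samples):
        sample = rng.choices(pairs, k=sample_size)
        xs, ys = zip(*sample)
        results.append(pearson_r(xs, ys))
    return results


# Synthetic stand-in for the survey: 240 respondents with two weakly related scores.
data_rng = random.Random(42)
pool = []
for _ in range(240):
    school = data_rng.gauss(0, 1)
    math_score = 0.3 * school + data_rng.gauss(0, 1)
    pool.append((school, math_score))

full_r = pearson_r(*zip(*pool))
sample_rs = resampled_correlations(pool)

print(f"full pool (n = {len(pool)}): r = {full_r:.2f}")
for i, r in enumerate(sample_rs, start=1):
    print(f"sample {i:2d} (n = 50): r = {r:.2f}")
```

Running it shows how much the coefficient moves from one sample of 50 to the next, even though every sample comes from the same pool.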
